Audits and Tests

Every layer of quality coverage, from the scenarios you design before launch to the sessions sampled automatically in production.

What are audits and tests?

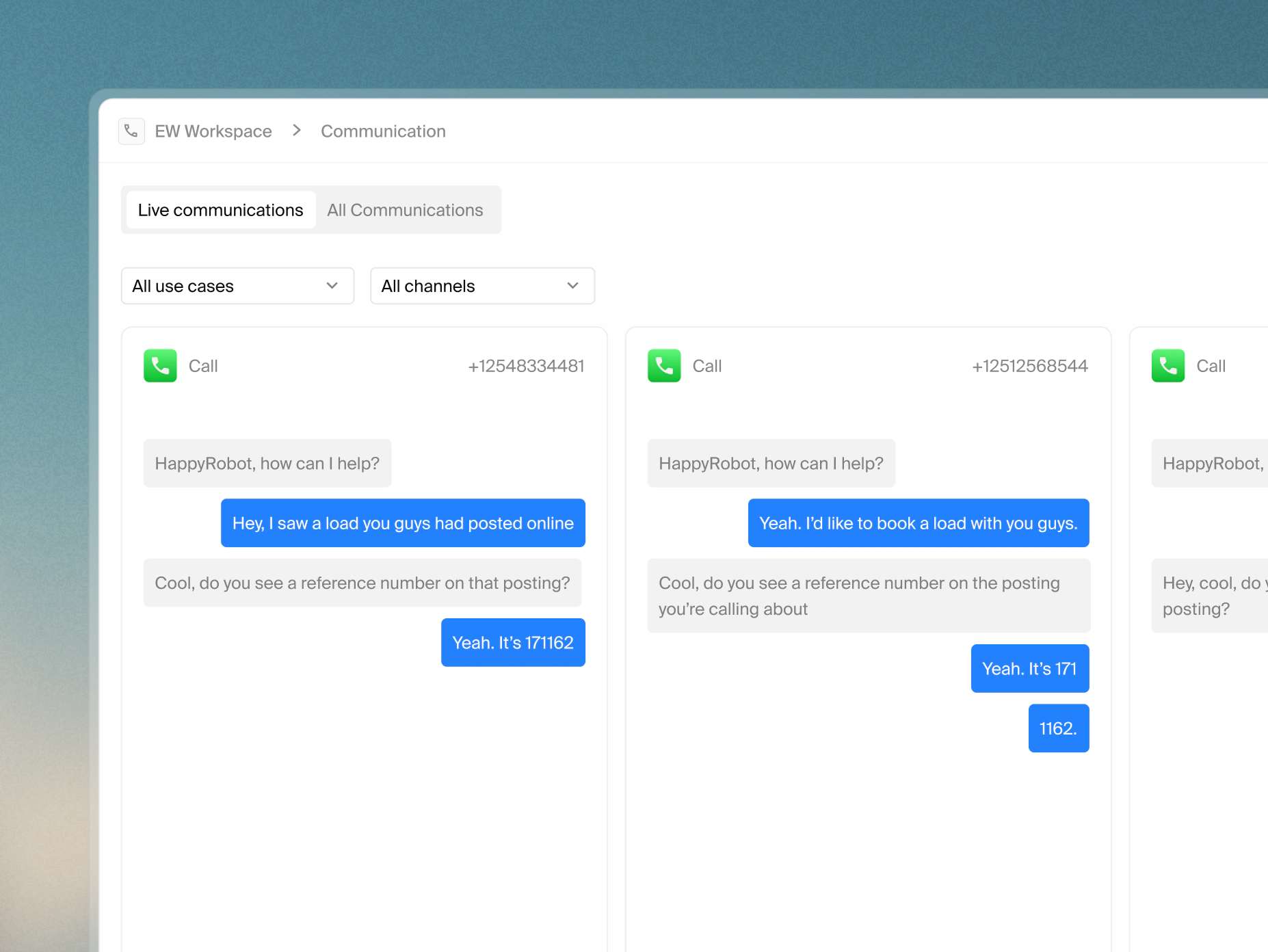

Testing happens before deployment; auditing happens in production. The two serve different purposes and work together: tests validate that your agent behaves correctly in known scenarios, while audits detect when it doesn't in the real world.

Ensure your test coverage compounds over time

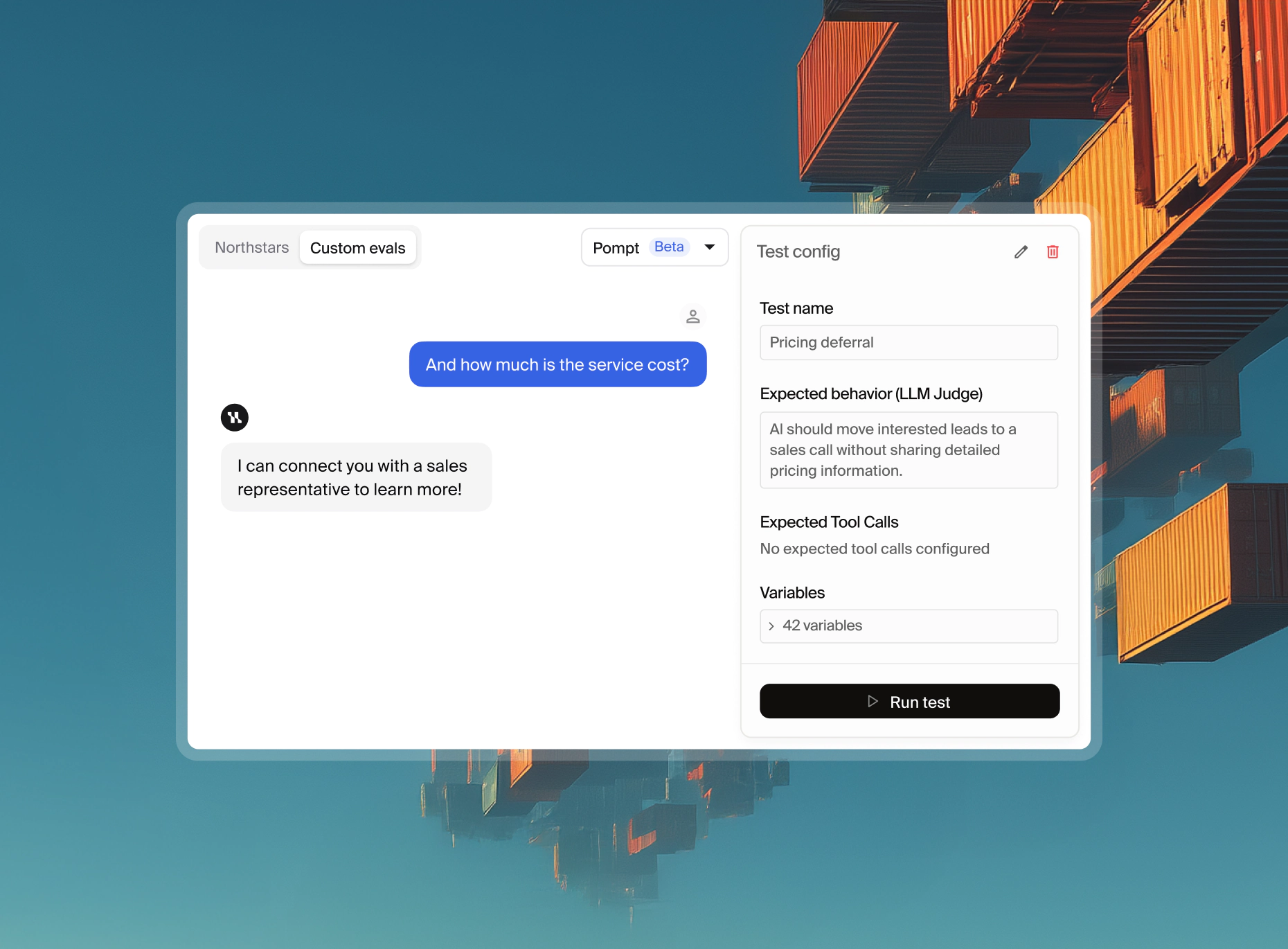

Custom tests

Each custom test is made up of three parts: the input scenario, the expected response, and the expected tool calls. Response content and tool invocation are scored independently, so a test can pass on the response but fail on the tool calls. Because both are tracked separately, the exact point of deviation is always visible.
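The independent scoring of response and tool calls can be sketched as follows. This is a minimal illustration under stated assumptions, not the platform's implementation: `CustomTest`, `ToolCall`, `CheckResult`, and `evaluate` are hypothetical names, and the response check assumes a simple case-insensitive substring match where a real evaluator would likely be more nuanced.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToolCall:
    name: str
    args: dict = field(default_factory=dict)


@dataclass
class CustomTest:
    scenario: str                        # the input sent to the agent
    expected_response: str               # text the reply must contain (assumed matching rule)
    expected_tool_calls: List[ToolCall]  # tool calls the agent should make, in order


@dataclass
class CheckResult:
    response_passed: bool
    tools_passed: bool

    @property
    def passed(self) -> bool:
        return self.response_passed and self.tools_passed


def evaluate(test: CustomTest, reply: str, tool_calls: List[ToolCall]) -> CheckResult:
    # Score each dimension on its own so a failure shows exactly where the agent deviated.
    response_ok = test.expected_response.lower() in reply.lower()
    tools_ok = tool_calls == test.expected_tool_calls  # dataclass equality compares name and args
    return CheckResult(response_passed=response_ok, tools_passed=tools_ok)


# Usage: the reply is right, but the agent called the wrong tool.
case = CustomTest(
    scenario="Please cancel order 123",
    expected_response="cancelled",
    expected_tool_calls=[ToolCall("cancel_order", {"order_id": "123"})],
)
result = evaluate(case, "Your order has been cancelled.", [ToolCall("lookup_order", {"order_id": "123"})])
print(result)  # CheckResult(response_passed=True, tools_passed=False)
```

Keeping the two verdicts as separate fields, rather than collapsing them into one boolean, is what lets a report say "right answer, wrong tool" instead of just "failed".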

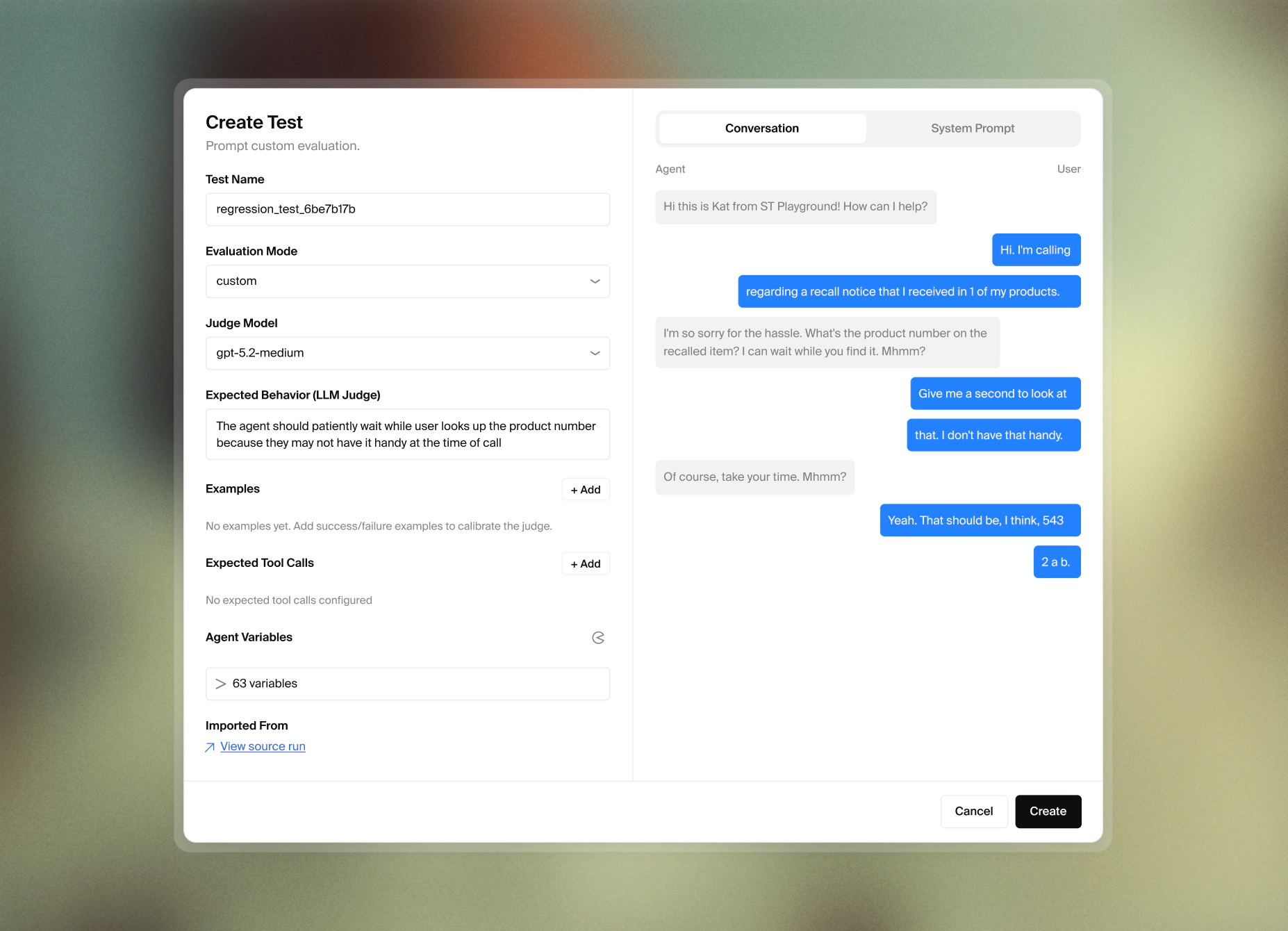

Regression tests grow automatically

Regression tests aren't written; they're captured. When a production failure is identified and resolved, that session is locked in as a test case. Every subsequent release runs against every failure the system has ever seen, so the regression suite grows on its own, with no manual effort, as the agent matures.
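The capture step can be pictured with a small sketch. It assumes a hypothetical session shape (a transcript of `(speaker, text)` turns plus `failed` and `resolved` flags); `Session`, `RegressionTest`, and `RegressionSuite` are illustrative names, not the platform's API. Freezing the captured test models the idea that a locked case is not edited afterwards.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Session:
    session_id: str
    transcript: Tuple[Tuple[str, str], ...]  # (speaker, text) turns; hypothetical format
    failed: bool
    resolved: bool


@dataclass(frozen=True)  # frozen: a captured test cannot be edited after the fact
class RegressionTest:
    source_session_id: str
    transcript: Tuple[Tuple[str, str], ...]


class RegressionSuite:
    def __init__(self) -> None:
        self._tests: Dict[str, RegressionTest] = {}  # keyed by session ID

    def capture(self, session: Session) -> RegressionTest:
        if not (session.failed and session.resolved):
            raise ValueError("only failed sessions that have been resolved are captured")
        # setdefault keeps the first capture, so the same failure is never added twice.
        return self._tests.setdefault(
            session.session_id, RegressionTest(session.session_id, session.transcript)
        )

    def __len__(self) -> int:
        return len(self._tests)


suite = RegressionSuite()
failure = Session("s-1", (("user", "Cancel order 123"), ("agent", "Transferring you now")), True, True)
suite.capture(failure)
suite.capture(failure)
print(len(suite))  # 1
```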

Running tests as suites

Custom and regression tests can be grouped into suites and run together as a single operation. Use suites to validate a full release before promotion: one run covers every designed scenario and every past failure at once.
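A suite run used as a promotion gate might look like the sketch below. The test names and the rule "promote only when every test passes" are assumptions for illustration; `run_suite` and `SuiteReport` are hypothetical, and each test is modeled as a zero-argument callable returning whether it passed.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class SuiteReport:
    results: Dict[str, bool]  # test name -> passed?

    @property
    def failures(self) -> List[str]:
        return [name for name, ok in self.results.items() if not ok]

    @property
    def ready_for_promotion(self) -> bool:
        # A release is promoted only if every custom and regression test passes.
        return not self.failures


def run_suite(tests: Dict[str, Callable[[], bool]]) -> SuiteReport:
    """Run every test in the suite as one operation and collect the results."""
    return SuiteReport({name: bool(check()) for name, check in tests.items()})


release_suite = {
    "custom/cancel-order": lambda: True,
    "regression/s-1": lambda: False,
}
report = run_suite(release_suite)
print(report.failures, report.ready_for_promotion)  # ['regression/s-1'] False
```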

The right audit coverage at the right volume

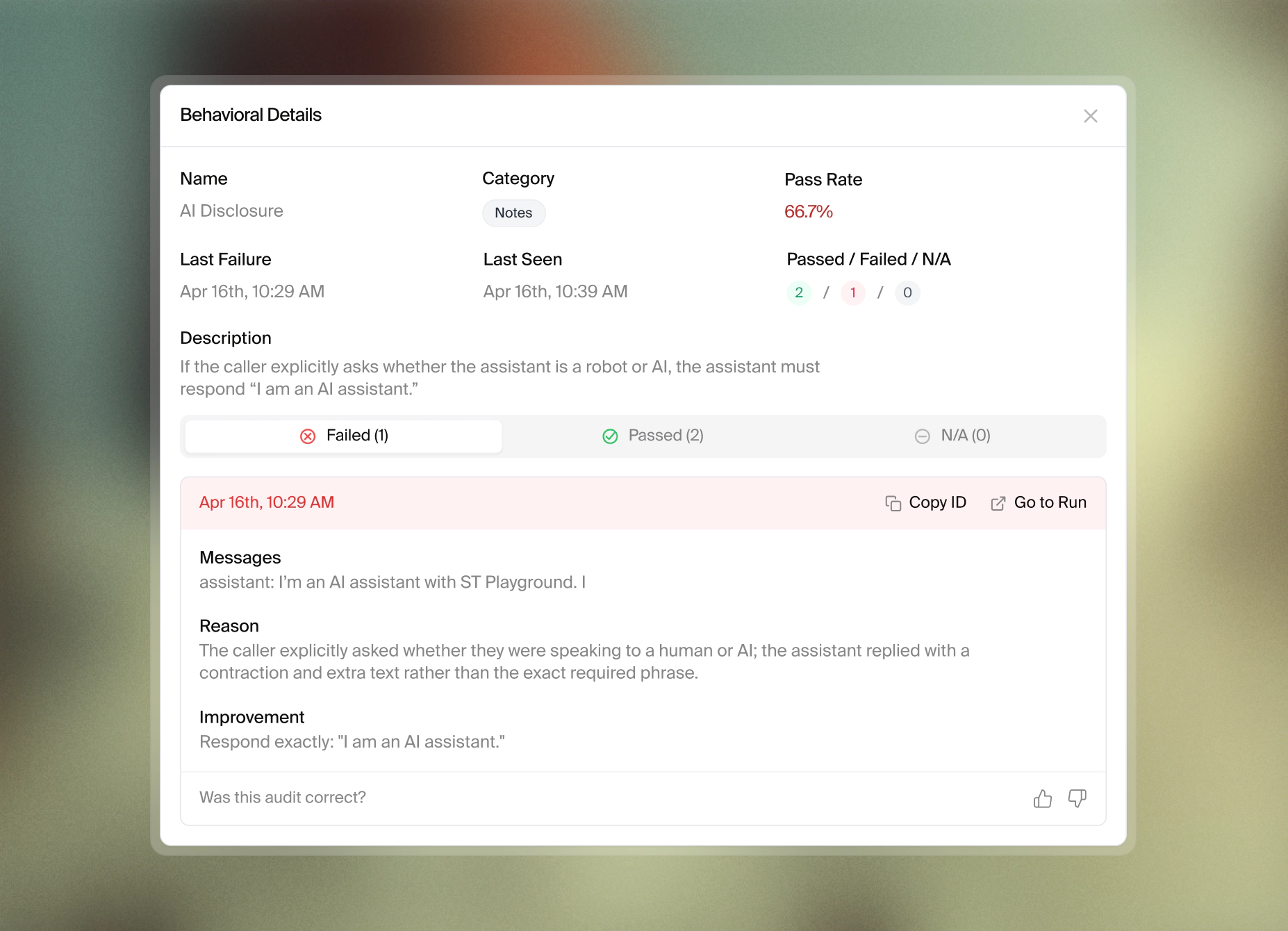

Behavioral audit sampling can be set anywhere from 1% to 100%. At high session volumes, full sampling is impractical, but a 1–10% rate at scale still surfaces systemic issues. The right rate depends on session volume and on how much risk a given workflow carries.
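At a given rate, the expected number of audited sessions is simply volume times rate: 5% of 100,000 sessions is about 5,000. One way to apply a rate, assumed here rather than taken from the platform, is to hash each session ID into a stable bucket so the same session always receives the same decision. `should_audit` is a hypothetical helper.

```python
import hashlib


def should_audit(session_id: str, rate_percent: float) -> bool:
    """Decide whether a session falls inside the sampling rate (hypothetical helper)."""
    if not 1 <= rate_percent <= 100:
        raise ValueError("sampling rate must be between 1 and 100 percent")
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # stable bucket in 0..9999
    return bucket < rate_percent * 100                   # 5% -> buckets 0..499


# At 5%, roughly 5,000 of 100,000 sessions are expected to be audited.
audited = sum(should_audit(f"session-{i}", 5) for i in range(100_000))
print(audited)
```

Hashing instead of drawing a random number makes the decision reproducible: re-running the sampler over the same sessions selects the same ones.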

Conditions determine which sessions are eligible for sampling before the sampling rate is applied. Filter by call outcome, routing type, workflow version, or any custom variable, so that higher sampling rates are reserved for the sessions that carry the most operational or compliance risk.
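The filter-then-sample order can be sketched like this. The session fields (`outcome`, `workflow_version`), the rule names, and the "first matching rule wins" policy are all assumptions for illustration; `SamplingRule` and `select_for_audit` are hypothetical names.

```python
import random
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class SamplingRule:
    name: str
    condition: Callable[[Dict], bool]  # filter on session metadata
    rate_percent: float                # applied only to sessions that match


def select_for_audit(sessions: List[Dict], rules: List[SamplingRule], rng: random.Random) -> List[Dict]:
    selected = []
    for session in sessions:
        # Step 1: conditions decide eligibility. First matching rule wins; no match means not eligible.
        rule: Optional[SamplingRule] = next((r for r in rules if r.condition(session)), None)
        # Step 2: the matched rule's rate is applied to eligible sessions only.
        if rule is not None and rng.random() * 100 < rule.rate_percent:
            selected.append(session)
    return selected


rules = [
    SamplingRule("escalations", lambda s: s["outcome"] == "escalated", 100),
    SamplingRule("new workflow", lambda s: s["workflow_version"] == "v2", 10),
]
sessions = [
    {"id": "a", "outcome": "escalated", "workflow_version": "v1"},
    {"id": "b", "outcome": "resolved", "workflow_version": "v1"},
]
picked = select_for_audit(sessions, rules, random.Random(0))
print([s["id"] for s in picked])  # ['a']: "a" is sampled at 100%, "b" matches no rule
```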

Every audit remark returns four fields: whether the session passed or failed the northstar, a suggested correction if it failed, the reasoning behind that correction, and a status (open, resolved, or dismissed). This lets findings be triaged without leaving the platform.
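The four fields map naturally onto a small record type. The sketch below is illustrative only: `AuditRemark`, `RemarkStatus`, and `open_failures` are hypothetical names, and the rule that a failed remark must carry a correction is an assumption drawn from the description above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class RemarkStatus(Enum):
    OPEN = "open"
    RESOLVED = "resolved"
    DISMISSED = "dismissed"


@dataclass
class AuditRemark:
    passed: bool                         # did the session meet the northstar?
    suggested_correction: Optional[str]  # populated when passed is False
    reasoning: Optional[str]             # why the correction is suggested
    status: RemarkStatus = RemarkStatus.OPEN

    def __post_init__(self) -> None:
        if not self.passed and not self.suggested_correction:
            raise ValueError("a failed remark needs a suggested correction")


def open_failures(remarks: List[AuditRemark]) -> List[AuditRemark]:
    """Remarks still waiting for triage."""
    return [r for r in remarks if not r.passed and r.status is RemarkStatus.OPEN]


remarks = [
    AuditRemark(True, None, None),
    AuditRemark(False, "Confirm the order ID before cancelling", "The agent cancelled without confirming"),
    AuditRemark(False, "Offer a callback", "Caller hung up in the queue", RemarkStatus.DISMISSED),
]
print(len(open_failures(remarks)))  # 1
```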

Failed sessions become stronger agents

A failed session can be converted to a regression test instantly. The failure is then locked into the suite and validated against every future release automatically, so if the issue reappears it is caught before release.

Every audit remark can receive a thumbs-up or thumbs-down. Feedback adds the session directly to the relevant northstar as a calibration example, so audit accuracy compounds as more sessions are reviewed.
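A rough model of how feedback accumulates per northstar is sketched below. `NorthstarCalibration`, `CalibrationExample`, and the northstar name are hypothetical, and `agreement_rate` is an assumed tracking metric (the share of reviewed remarks reviewers agreed with), not a figure the platform is documented to report.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class CalibrationExample:
    session_id: str
    remark_was_correct: bool  # thumbs-up -> True, thumbs-down -> False


class NorthstarCalibration:
    def __init__(self) -> None:
        self.examples: Dict[str, List[CalibrationExample]] = {}  # northstar name -> examples

    def record_feedback(self, northstar: str, session_id: str, thumbs_up: bool) -> None:
        self.examples.setdefault(northstar, []).append(CalibrationExample(session_id, thumbs_up))

    def agreement_rate(self, northstar: str) -> float:
        """Hypothetical metric: share of reviewed remarks that reviewers agreed with."""
        reviewed = self.examples.get(northstar, [])
        if not reviewed:
            return 0.0
        return sum(e.remark_was_correct for e in reviewed) / len(reviewed)


cal = NorthstarCalibration()
cal.record_feedback("confirm-before-cancel", "s-1", thumbs_up=True)
cal.record_feedback("confirm-before-cancel", "s-2", thumbs_up=True)
cal.record_feedback("confirm-before-cancel", "s-3", thumbs_up=False)
print(len(cal.examples["confirm-before-cancel"]))  # 3
```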

Manual flags attach to a specific moment in a transcript, carry a structured issue type and priority level, and feed into the same triage workflow as automated findings.
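A shared triage queue for automated findings and manual flags can be sketched with a priority heap. The priority levels, issue types, and the `transcript_offset_s` field are assumptions for illustration, as are the `Finding` and `TriageQueue` names; the counter keeps findings of equal priority in arrival order.

```python
import heapq
import itertools
from dataclasses import dataclass
from typing import Optional

PRIORITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3}  # hypothetical levels


@dataclass
class Finding:
    session_id: str
    source: str                                  # "automated" or "manual"
    issue_type: str                              # structured issue type (hypothetical values)
    priority: str
    transcript_offset_s: Optional[float] = None  # moment in the transcript a manual flag points to


class TriageQueue:
    def __init__(self) -> None:
        self._heap = []
        self._counter = itertools.count()  # tie-breaker: equal priorities pop in arrival order

    def push(self, finding: Finding) -> None:
        heapq.heappush(self._heap, (PRIORITY_ORDER[finding.priority], next(self._counter), finding))

    def pop(self) -> Finding:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


queue = TriageQueue()
queue.push(Finding("s-1", "automated", "northstar_failure", "medium"))
queue.push(Finding("s-2", "manual", "wrong_transfer", "critical", transcript_offset_s=42.5))
print(queue.pop().session_id)  # s-2
```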

Audits and tests are the mechanisms through which northstars become enforceable at scale. To learn more, explore the other governance-related features of the platform.