Proprietary voice stack for human-like conversations

A purpose-built voice pipeline engineered for the unpredictability of real enterprise calls

Why HappyRobot's voice AI is different

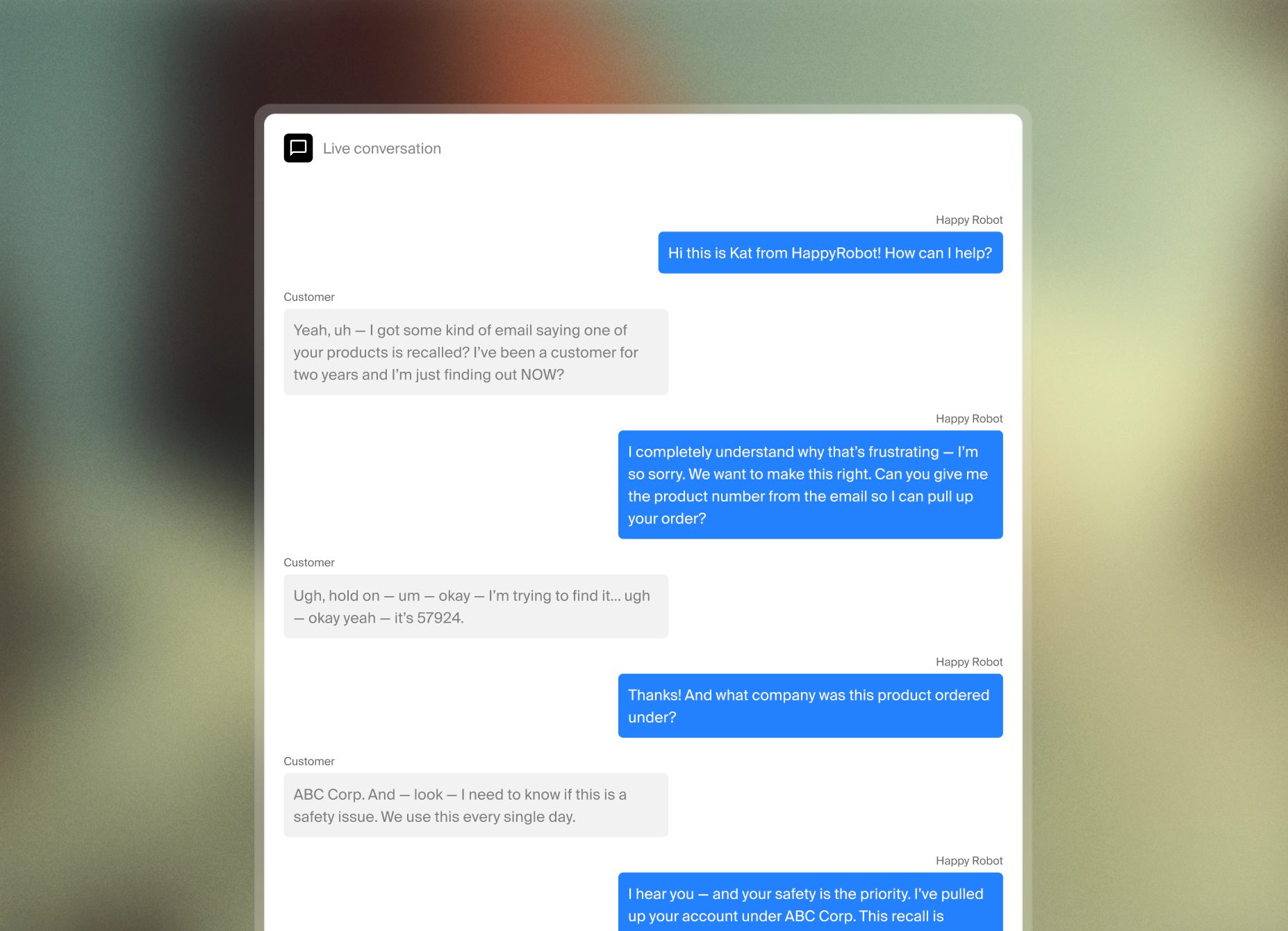

Most voice AI is built for controlled conditions. Enterprise telephony isn't controlled: callers bring accents, background noise, and domain-specific terminology, and they have no patience for robotic responses or awkward pauses. HappyRobot's voice pipeline is a 10-model stack in which every component is fine-tuned specifically for live telephony at enterprise scale, not adapted from a general-purpose consumer product.

Back-and-forth of a real call without breaking flow

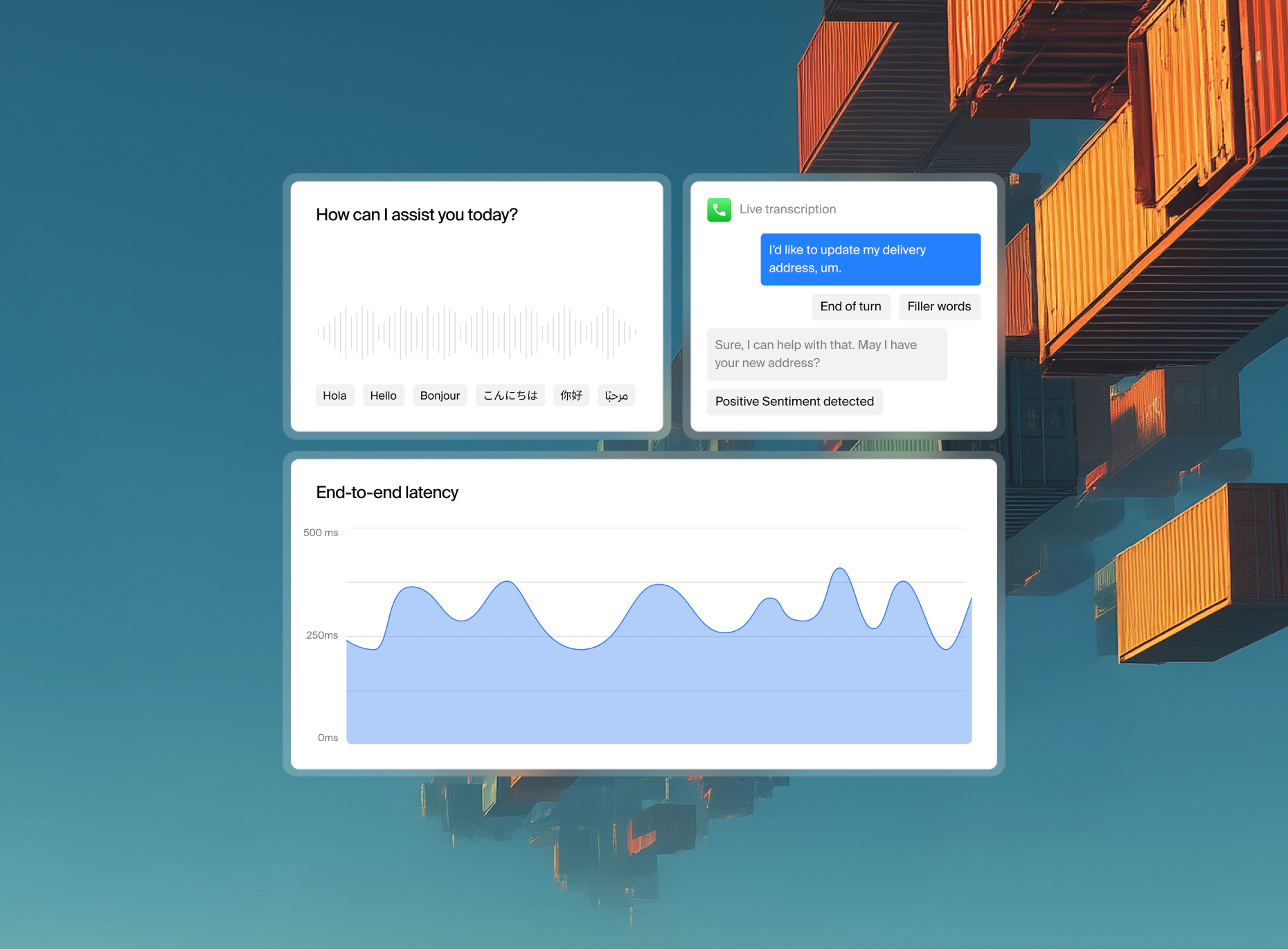

Telling a caller who has finished speaking apart from one who is pausing mid-thought, searching for a word, or trailing off is a hard problem in voice AI. A dedicated end-of-turn model makes this determination independently of the transcription model, so the agent doesn't cut in too early or wait too long. The result is a conversational rhythm that feels natural rather than mechanical.
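To make the trade-off concrete, here is a minimal sketch of how an end-of-turn signal might be combined with a silence timer. It is an illustration under stated assumptions, not HappyRobot's implementation: the function name `should_respond`, the probability input, and every threshold value are hypothetical.

```python
def should_respond(
    silence_ms: float,
    eot_probability: float,
    *,
    min_silence_ms: float = 200.0,   # hypothetical: never reply faster than this
    max_silence_ms: float = 1500.0,  # hypothetical: never wait longer than this
    threshold: float = 0.7,          # hypothetical confidence cut-off
) -> bool:
    """Decide whether the agent should start speaking.

    eot_probability stands in for the output of an end-of-turn model
    (0.0 = caller is mid-thought, 1.0 = caller has finished).
    """
    # Hard ceiling: after a long silence, respond regardless of the model.
    if silence_ms >= max_silence_ms:
        return True
    # Otherwise require both a short pause and a confident end-of-turn signal.
    return silence_ms >= min_silence_ms and eot_probability >= threshold
```

The floor guards against cutting in too early, and the ceiling guards against waiting too long when the model is unsure.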

Not every sound mid-call is an interruption. A caller saying "um" or "uh" mid-sentence is thinking, not handing over the floor. A dedicated model distinguishes fillers from genuine interruptions so the agent responds to real conversational signals rather than reacting to every noise.
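As a rough illustration of the distinction, the sketch below treats an utterance as a genuine interruption only if it contains at least one non-filler word. The filler list and the function name are assumptions made for this example; a word list is a simple stand-in for the trained model described above.

```python
import re

# Hypothetical filler vocabulary, for illustration only.
FILLERS = {"um", "uh", "er", "hmm", "mm", "mhm", "uh-huh"}

def is_genuine_interruption(transcript: str) -> bool:
    """Return True if the utterance contains any word that is not a filler."""
    # Lowercase and split into word-like tokens (letters, apostrophes, hyphens).
    words = re.findall(r"[a-z'-]+", transcript.lower())
    return any(word not in FILLERS for word in words)
```

With this rule, "um, uh" leaves the agent speaking, while "wait, that's wrong" hands the floor back to the caller.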

Background noise, hold music, and ambient sound in call center environments can all trigger false positives in basic voice detection systems. The voice activity detection (VAD) model is trained to isolate actual speech from everything else that happens on a live call, keeping the pipeline focused on what the caller is actually saying.

Accuracy on words that matter the most - everything that gets mangled in generic models

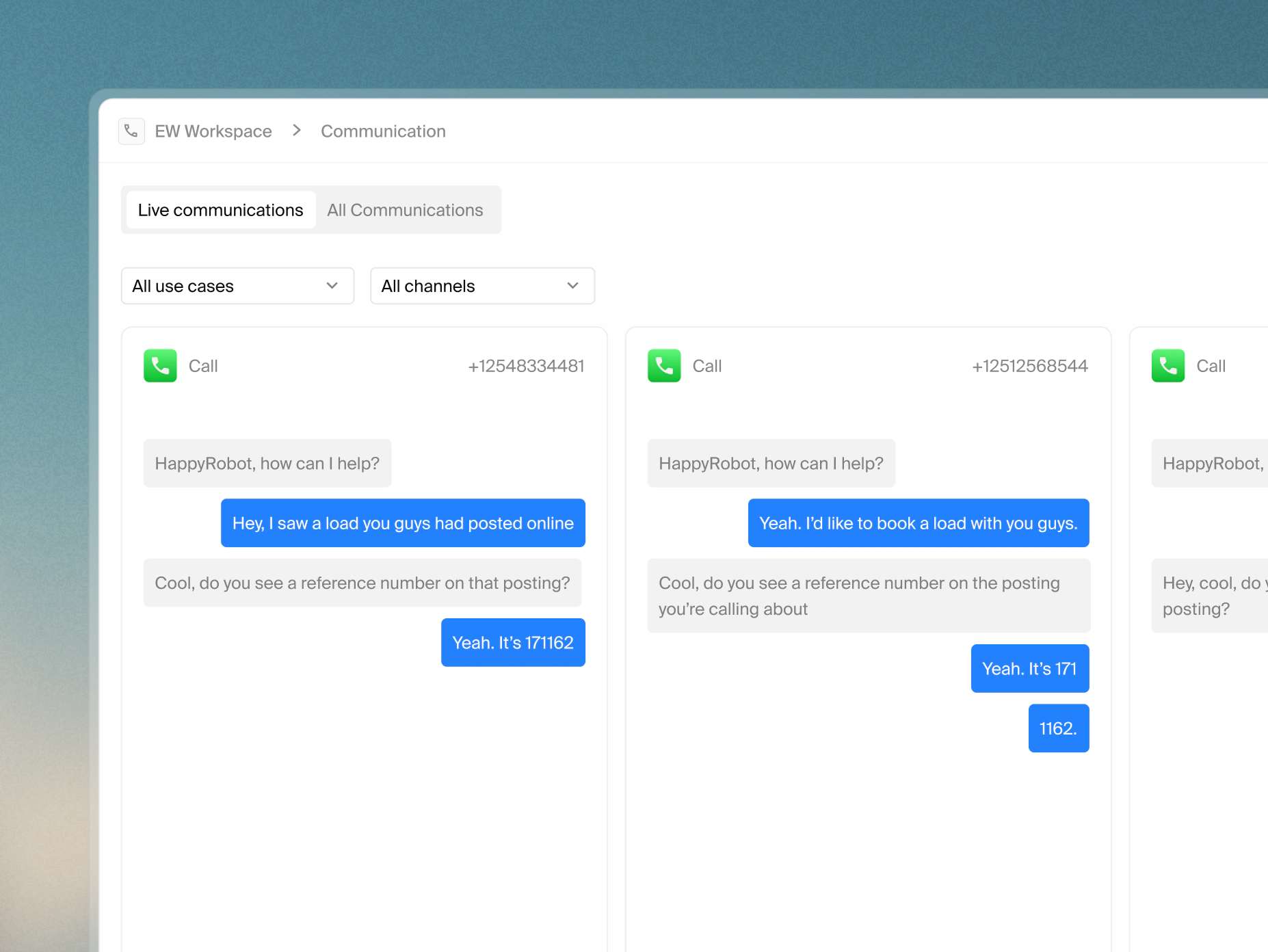

Multi-provider transcription with automatic failover

Parallel transcription providers run simultaneously with automatic failover - if one degrades for any reason, the pipeline switches instantly without the caller noticing or the conversation breaking.
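The pattern can be sketched as follows: all providers start on the same audio at once, and the first healthy result in priority order wins. This is a minimal sketch under assumptions, not the production pipeline. The `Transcriber` type, the function name, and the timeout value are all hypothetical.

```python
import asyncio
from typing import Any, Callable, Coroutine, Sequence

# Hypothetical provider interface: an async function from audio bytes to text.
Transcriber = Callable[[bytes], Coroutine[Any, Any, str]]

async def transcribe_with_failover(
    audio: bytes,
    providers: Sequence[Transcriber],
    timeout_s: float = 0.5,  # hypothetical per-provider patience
) -> str:
    # Start every provider immediately so a fallback is already in flight.
    tasks = [asyncio.create_task(provider(audio)) for provider in providers]
    try:
        for task in tasks:  # providers are listed in priority order
            try:
                # shield() keeps the task alive if wait_for times out.
                return await asyncio.wait_for(asyncio.shield(task), timeout_s)
            except Exception:
                continue  # provider errored or was too slow: try the next one
        raise RuntimeError("all transcription providers failed")
    finally:
        # Stop any work still running and collect leftover exceptions.
        for task in tasks:
            task.cancel()
        await asyncio.gather(*tasks, return_exceptions=True)
```

Because the fallback providers are already running, switching costs little extra time when the primary fails or stalls.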

Numbers and reference data accuracy

Generic models routinely mishear the digit sequences in order numbers, tracking IDs, phone numbers, and account references. The transcription stack is specifically tuned for numeric accuracy in enterprise contexts, where a single misheard digit breaks a downstream lookup.

Keyword boosting

General transcription models systematically underperform on domain-specific terms such as product codes, carrier names, and industry jargon. Keyword boosting primes the transcription layer for the specific vocabulary your operation uses, so the terms that matter most to your workflows are the ones captured most accurately.
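One common way to implement this kind of boosting is to rescore the recognizer's candidate transcripts in favor of those containing known terms. The sketch below shows that general technique, not necessarily how HappyRobot does it; the function name, the score scale, and the boost value are assumptions.

```python
from typing import Iterable, Sequence, Tuple

def pick_hypothesis(
    hypotheses: Sequence[Tuple[str, float]],  # (text, recognizer score), higher is better
    boosted_terms: Iterable[str],
    boost: float = 0.5,  # hypothetical bonus per matched term
) -> str:
    """Return the candidate transcript with the best boosted score."""
    terms = [term.lower() for term in boosted_terms]

    def boosted_score(item: Tuple[str, float]) -> float:
        text, score = item
        lowered = text.lower()
        # Count how many boosted terms appear in this candidate.
        hits = sum(1 for term in terms if term in lowered)
        return score + boost * hits

    return max(hypotheses, key=boosted_score)[0]
```

For example, given the candidates "ship via all dominion" (score -1.0) and "ship via old dominion" (score -1.2), boosting the carrier name "Old Dominion" lifts the second candidate above the first.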

How the agent sounds and why that matters for enterprise calls

Fine-tuned Text-to-Speech (TTS) for the real world

Text-to-speech in a live call environment has different requirements than a podcast or a smart speaker. Pronunciation of numbers, dates, abbreviations, and domain-specific terms needs to be consistent and correct. Our TTS model is fine-tuned for telephony delivery, not adapted from a general-purpose voice synthesis product.

Voice matching across providers

When a provider switch happens mid-call due to failover or routing, voice characteristics are preserved so the caller doesn't notice a change in how the agent sounds. Consistency is maintained at the persona level, not just the text level.

30+ languages with matched voice quality

Multilingual support isn't just translation. Each language deployment maintains the same voice quality, latency profile, and conversation model behavior as the primary language. Callers in any supported language get the same experience, not a degraded fallback.

Latency

In live voice, latency isn't just a metric; it's something callers feel. Every component in the pipeline (transcription, LLM reasoning, TTS synthesis, and network transit) is independently optimized and individually measured. Latency is monitored end to end and per component in production, so degradation is traced to its source immediately rather than surfacing as a general slowdown.
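Per-component measurement can be sketched as a timer wrapped around each pipeline stage. The example is a minimal illustration under assumptions: the `LatencyTracker` class and the component names are hypothetical and do not describe HappyRobot's monitoring stack.

```python
import time
from collections import defaultdict
from contextlib import contextmanager
from typing import Optional

class LatencyTracker:
    """Collects latency samples (in milliseconds) per pipeline component."""

    def __init__(self) -> None:
        self.samples_ms = defaultdict(list)

    @contextmanager
    def measure(self, component: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record elapsed time even if the wrapped stage raised.
            elapsed_ms = (time.perf_counter() - start) * 1000.0
            self.samples_ms[component].append(elapsed_ms)

    def slowest(self) -> Optional[str]:
        """Return the component with the highest mean latency, or None."""
        if not self.samples_ms:
            return None
        return max(
            self.samples_ms,
            key=lambda c: sum(self.samples_ms[c]) / len(self.samples_ms[c]),
        )
```

Wrapping each stage in `with tracker.measure("transcription"):` attributes a slowdown to a specific stage instead of the call as a whole.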

Voice AI is one part of the broader HappyRobot agent architecture. Click below to learn more about how HappyRobot agents are built and deployed.